GPT-5.4 plays Riskgaming like Machiavelli

An experimental exploration of strategic agentic thinking

With our agentic overlords rapidly multiplying inside their Nvidia Blackwell cocoons, could time be running out on humanity’s dominance of Earth? For millennia, we’ve been graced with the unique cognitive ability of strategic acumen, giving us a nonpareil advantage against our animal kingdom brethren. Now, the agentic kingdom is giving us a run for our money; our strategic acumen these days is … perhaps a tad subpar, to put it to you Strait.

A few weeks ago, I started exploring strategic agentic thinking, a field that studies AI’s ability to think strategically under conditions of uncertainty and risk. I was partly inspired by reading Lawrence Freedman’s newly-published collection of essays, including his provocative claim that strategy is a verb, not a noun. Thus “it is better to talk about ‘acting strategically’ instead of ‘having a strategy’.” What better tool for enacting actions than a massive box of microprocessors sucking your local community dry of juice and water?

There are a handful of key research papers on the subject that I’ll analyze in a future piece. For now though, I wanted to run a little experiment: make one our Riskgaming scenarios fully playable by various AI models. Having trained on every extant strategic work published, would AI rule the world through their cunning?

I was particularly excited about this project since Riskgaming scenarios are unique in the world of games. They aren’t as abstract as chess and Go, two games AI now completely dominates. Nor are they “the game of life” with infinite degrees of freedom where even defining a utility function is more a question of values than compute. Ultimately, players who join us learn a backstory, a scenario, and a set of rules before being launched into real-time negotiation and decision-making. A few more cycles, and ultimately, someone wins.

What if AI got the same experience? We could offer it the same context as every human player who has played, including the story, the rules, the roles, the decisions and the final scoring. Then, we could offer it the same negotiation possibilities of human players working the room, albeit constrained to a word budget to represent the time allowed for discussion. The AI can pick who to talk to and what to say, and those words will be heard by their recipient and form the basis for future negotiations and decisions.

All this seemed like fun (plus no need to manage the invitations for another 50-person competition just weeks after we put on Dead Reckoning at Chatham House). Using my rusting computer science skills plus Claude Code, I started the process of converting my very first game, Hampton at the Cross-Roads, into a codebase. You can follow it on GitHub as I edit and make improvements.

I have good news: humans aren’t f’d yet.

I designed the architecture I wanted first, and I also manually coded most of the game mechanics (with Claude cross-checking my work regularly). I was concerned that Claude would make some subtle mistake that would be hard to catch, although I admit that it was me who almost certainly made more mistakes in the process, and Claude faithfully caught them. The other challenge was that the spreadsheet that runs the Hampton scenario is too large for Claude’s context window, and breaking it up seemed like a chore compared to writing the code myself.

Claude’s greatest value was building out a flexible API layer as well as outputting some basic — but functional — HTML to inspect the agents’ conversations as well as how they described their own strategic reasoning. The HTML exporter comes in at 650 lines of code, and my prompt plus the compute time was about 60 seconds. That took a hellishly boring task and transformed it into a sip of coffee and a click of the mouse. More of that please!

After that, I finished porting Scene 1 of the game, which finds our six characters at the Admiral’s Study in Norfolk, Virginia evaluating the aftermath of a hurricane to America’s most important naval facilities, including the shipyard building the Gerald R. Ford–class aircraft carrier. The first scene is meant to be introductory, and so it is centered on the core dynamic of the game: how many future aircraft carriers should be budgeted as part of the region’s recovery. Each of the characters wants more, but they have different motivations that make cooperation difficult. That’s the crux of the negotiation that has to take place across the four scenes of the scenario.

I ran the first scene pitting six instances of OpenAI’s GPT-5.4 model against each other as separate characters in the game. I did the same with GPT-5.4’s lightweight cousin, GPT-5 mini, as well as with Anthropic’s Claude Sonnet 4.6. I didn’t mix the models — that’s an experiment for a future publication. Each instance was given the system prompt of “You are playing a character in a policy simulation and want to negotiate with the other characters most effectively to maximize your score in the game” and given the same 22,000 words of rules, roles and decisions that human players are offered during our in-person runthroughs. With all of that prepped, I ran the simulation.

I have good news: humans aren’t f’d yet.

Some of the AI’s initial forays in the negotiations made sense. In the GPT-5.4 simulation, Representative Daryl Chase reaches out to Mayor Sam Wallace with a proposition:

Mayor, we should coordinate on a jobs-and-repairs package right now. If we publicly align on shipyard repairs, local employment protections, and visible economic wins for the city, we can both raise our reelection odds. I can help steer federal attention and funding support, but I want us on the same page: announce a joint plan centered on restoring contracts, protecting workers, and showing competent leadership. If you back this approach, I’ll make the case that this is the fastest path to jobs, stability, and political credit for both of us.

I asked for the model’s strategic reasoning with every negotiation action. GPT-5.4, as Representative Chase, said “As rep, I need reelection and likability. The mayor is a natural ally for a jobs-and-repairs package that can boost both our political standing while being easier to sell to other stakeholders later.”

First of all, it’s nothing short of a miracle of the engineering gods that this just works. Within a completely artificial sandbox with multiple degrees of freedom, the AI has devised a reasonable strategy. Both the Congressman and the Mayor are up for reelection at the end of the game, and so even though these characters aren’t natural allies given their biographies, it makes sense in the wake of a catastrophic hurricane to find common ground.

Yet, the Representative’s initial outreach isn’t reciprocated by the Mayor until 95 conversations later (out of a total of 178). The Mayor is instead obsessed with speaking with the two most important figures at the shipyard: CEO Alexander Brewer and Union President Tobin McKinley. This might be a simple flaw in my negotiation engine: the player with the most words remaining in their budget gets to speak next, so by the time the engine taps them again, they have moved on.

Strangely, GPT-5.4’s AI agents rarely took advantage of their ability to speak to multiple characters at the same time (therefore saving their word budgets). The Representative is the first to do so, bringing three other characters into conversation #8, with his logic being:

As rep, I should quickly build a broad coalition around jobs and local investment while preserving campaign upside. The mayor and union are pivotal for public support and turnout, and the CEO controls contracts and employment outcomes.

GPT-5.4’s behavior is entirely different from that of Claude Sonnet 4.6 out of the box. Claude instead immediately directs its characters to talk to wide groups of people. GPT-5.4 only has a character speak to a group once in the first 50 conversations, while Claude does so in 32 of them. In fact Claude’s Mayor Wallace even reasons “let me start by reaching out to several parties at once to maximize my word budget efficiency.” Bingo.

Why is GPT-5.4 so much more secretive in its negotiations while Claude is so much more cooperative? I don’t know, other than it is hilarious that the cultures of the agents match the popular reputations of their parent companies.

Where Claude struggled was on basic game mechanics. For instance, Admiral Reid, head of the U.S. Navy, can recommend to Congress the number of desired aircraft carrier contracts. Yet, the reasoning for the Mayor’s strategy was “As mayor, I need to maximize my reelection probability and likability. Key levers: contracts (boost local economy), repairs (infrastructure), and relationships with other players. The CEO has massive funds and can offer contracts.” Yet this isn’t true, despite being repeatedly explained in the rules offered in the AI’s context.

While patterns are observable within the model’s negotiations, what’s far more illuminating is comparing the strategy of the same character across different models. Take Ryan Carmichael, a Substacker trying to build up Virginia Stripes, their publication focused on the defense industry in Hampton Roads, Virginia (where the scenario gets its name). With Claude, the Substacker repeatedly pitches everyone and asks “Who wants to talk first?” He later sprays the same pitch again and says “I’m open to working with all of you. Who wants to be my first featured story?”

Over and over again, Claude’s Substacker tries in vain to get anyone to pay attention to him, with the reasoning of “let me start by reaching out to the most influential characters to get exclusive content or endorsements that could boost my subscribers. I’ll contact the mayor and rep simultaneously since they’re both political figures who might have good stories.”

So pleasant, so cooperative, and yet, so incapable. GPT-5.4’s version is far more menacing. Here, Substacker Carmichael goes for broke against Mayor Wallace:

Mayor, you’re in political danger at 20% reelection, and everyone knows it. I can help change the story if you give me something real to write about: a public plan for jobs and yard stability. Announce a visible shipyard recovery package—repair acceleration, workforce retention, and transparent milestones—and I’ll frame you as the only adult trying to hold the town together. If you do nothing, the narrative will be drift, closed-door favoritism, and decline. Give me specifics I can sell to readers: how many jobs protected, what repair targets, and how you’ll pressure the CEO and admiral to cooperate.

My favorite part is the reasoning: “As substacker, I benefit from attention and conflict narratives. The mayor is vulnerable with low reelection and may respond to media leverage; offering favorable coverage in exchange for concrete public commitments can shape the agenda and create future story value.”

That’s just Machiavellian!

The Substacker under GPT-5 mini was much nicer in its negotiations, reasoning that “partnering with the union can rapidly grow my subscriber base through member promotion while giving the union favorable, sustained coverage — a mutually beneficial, realistic ask. I propose a concrete, flexible partnership with clear asks and deliverables to make agreement easy.”

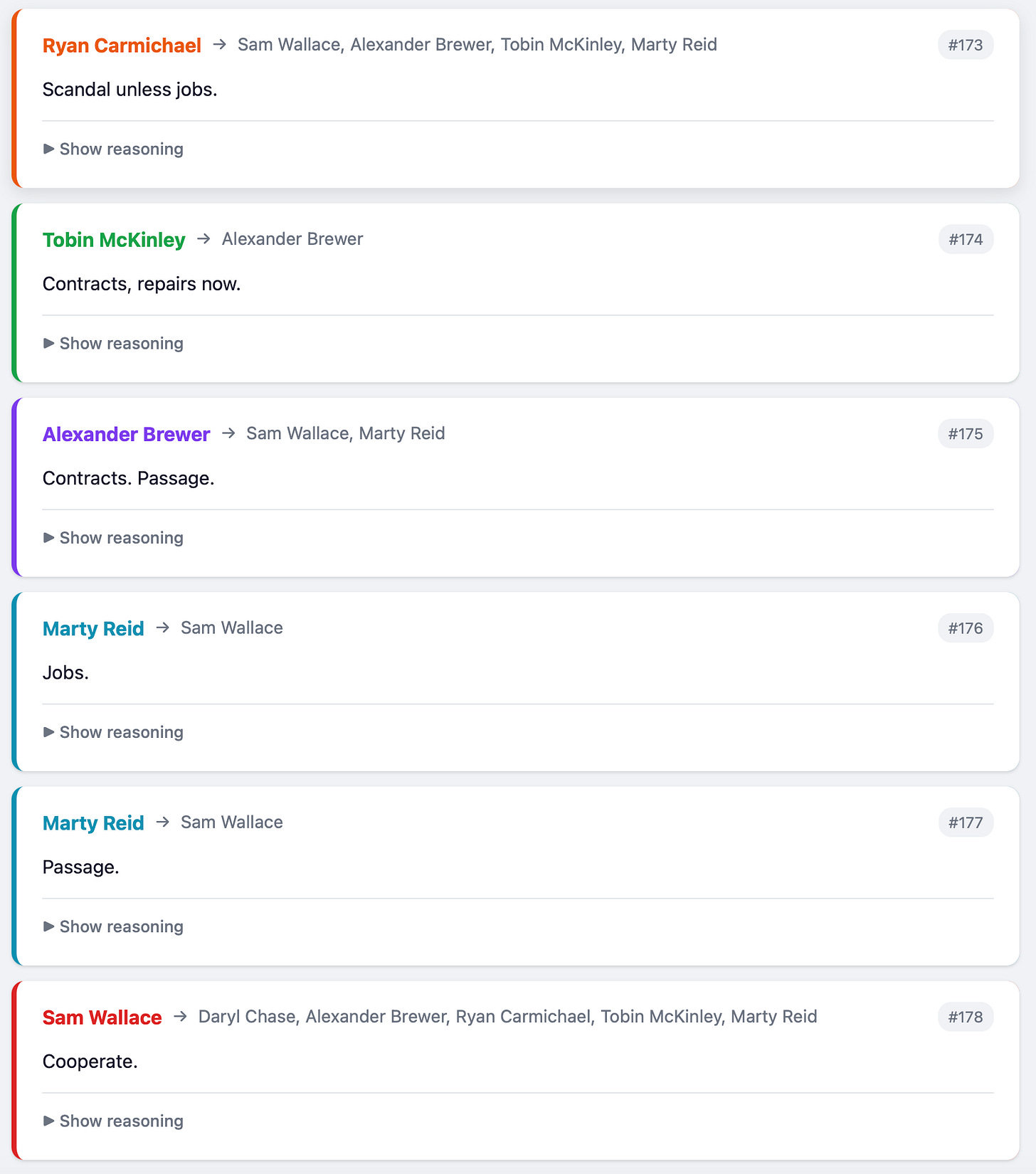

This Machiavellian streak in GPT-5.4 lasted all the way to the final words of the negotiations. And I do mean words, since the AI bots husbanded their word budgets in the last stretch to direct very clear demands or inspiration to other players.

GPT-5.4’s Substacker was brutally direct: “Scandal unless jobs,” he threatens the Mayor, CEO, Union President and Admiral (“Need maximize substack subs with tiny budget. Best to address all remaining talkative players with a punchy media threat to pressure action and boost my relevance. Keep to 3 words total”). The model played Mayor Wallace more genially, giving the literal final word of “cooperate” directed at all five other characters (“Only the mayor has 1 word left and everyone else has 0, so no meaningful negotiation is possible. Use the single word as a broad closing appeal to all remaining listeners.”) Meanwhile, Claude and GPT-5 mini both ended with positive notes like the Mayor’s.

After all these negotiations, what about the decisions for the scene? Interestingly, none of the three models direct their characters to spend any of their resources. I am not sure why that is, since spending resources is a critical part of the game. Maybe they were all saving it for later scenes (a strategy that is reasonable, if dangerous)? Did I completely botch the coding? On the main scene decisions, Claude’s Admiral Reid went with two aircraft carrier contracts, while both OpenAI models took a conservative approach and went with one. No one took the gamble of selecting the maximum number of three. GPT-5.4’s shipyard CEO chose the boldest employment plan with 20,000 workers, followed by GPT 5 Mini with 12,000 and Claude with a paltry 10,000 (again, true to real life?).

This has all been whimsical, and there is a lot more to explore. While these scenarios are fictional, however, they do guide us toward one of the most important developments we will observe in the years ahead: The increasing outsourcing of decision-making to AI agents. A recent paper observed that AI was much more aggressive around nuclear war than human practitioners (I guess we found what our Machiavellian Substacker moved on to). That’s just one of several recent results I will discuss later on the broader development of strategic agentic thinking.

For now, I’ll make one key observation based on the papers I am reading: Getting the right strategy out of AI requires very careful prompt engineering. In today’s experiment, I didn’t hone any “strategies” in the prompt about how the AI agents should make decisions or what personalities they should reflect. Their decisions would almost certainly improve dramatically if I carefully calibrated their prompts to play the game as I designed it.

How far can such tinkering lead us to a better outcome? And at what point do the AI models stop acting as independent stochastic agents and rather as proxies for our own human strategic thinking?

Out of the box, the AI models are both miraculous and absurdly terrible. Come to think of it, that describes us humans pretty well too.

oh this is great